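Kubernetes is a container orchestration engine open-sourced by Google. It supports automated deployment, large-scale scaling, and management of containerized applications. When an application is deployed in production, several instances of it are usually run so that incoming requests can be load-balanced across them.

In Kubernetes we can create multiple containers, each running one application instance, and rely on the built-in load-balancing strategy to manage, discover, and access that group of instances, without operators having to do complex manual configuration.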

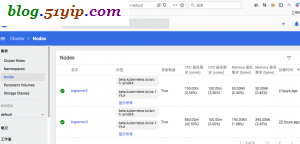

I. Servers

    10.0.40.222  bigserver2  worker
    10.0.40.193  bigserver3  master

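II. Set the hostname

(1) Change the hostname (use each node's own name; bigserver2 is shown here)

    # hostname bigserver2

The hostname command only changes the name until the next reboot. On CentOS 7, hostnamectl set-hostname bigserver2 makes the change persistent.

(2) Add the nodes to the hosts file

    # cat <<EOF >>/etc/hosts
    10.0.40.222 bigserver2
    10.0.40.193 bigserver3
    EOF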
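Because the heredoc above appends unconditionally, running it twice leaves duplicate entries in /etc/hosts. A minimal sketch of an idempotent variant follows; the function name `add_host_entry` is hypothetical and not part of the original article.

```shell
# add_host_entry FILE IP NAME   (hypothetical helper)
# Appends "IP NAME" to FILE unless a line already maps IP to NAME.
add_host_entry() {
    local file="$1" ip="$2" name="$3"
    # awk exits 0 only if some line starts with IP and lists NAME after it.
    if ! awk -v ip="$ip" -v name="$name" '
            $1 == ip { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
            END { exit !found }
        ' "$file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}
```

It would be called as root, for example `add_host_entry /etc/hosts 10.0.40.222 bigserver2`, once for each node.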

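III. Disable the firewall, SELinux, and swap

    # systemctl stop firewalld
    # systemctl disable firewalld
    # setenforce 0
    # sed -i "s/^SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config
    # swapoff -a
    # sed -i 's/.*swap.*/#&/' /etc/fstab

Recent Kubernetes versions require swap to be turned off; with swap enabled, kubelet refuses to start by default. The version installed in this article is 1.18.2.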
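The sed expression above prefixes every line containing "swap" with "#", including lines that are already commented, so repeated runs pile up "#" characters. As a sketch only, the variant below skips commented lines and prints the result instead of editing in place, so it can be reviewed first. The function name `comment_swap_lines` is an assumption made for illustration.

```shell
# comment_swap_lines FILE   (hypothetical helper)
# Prints FILE with active swap entries commented out.
# Lines that already start with "#" are left untouched.
comment_swap_lines() {
    sed -E '/^[[:space:]]*#/!s/^.*[[:space:]]swap[[:space:]].*$/#&/' "$1"
}
```

One way to use it is `comment_swap_lines /etc/fstab > /tmp/fstab.new`, then compare the two files before copying the new one over /etc/fstab.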

IV. Configure kernel parameters and apply them

    # cat > /etc/sysctl.d/k8s.conf <<EOF
    net.bridge.bridge-nf-call-ip6tables = 1
    net.bridge.bridge-nf-call-iptables = 1
    net.ipv4.ip_forward = 1
    EOF
    # sysctl --system
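A quick way to confirm that the file holds all three settings is sketched below. The helper name `missing_sysctl_keys` is hypothetical. Note also that the net.bridge.* keys only exist in the running kernel once the br_netfilter module is loaded, so `modprobe br_netfilter` may be needed before `sysctl --system` can apply them.

```shell
# missing_sysctl_keys FILE   (hypothetical helper)
# Prints each required key that FILE does not set to 1.
missing_sysctl_keys() {
    local file="$1" key
    for key in net.bridge.bridge-nf-call-ip6tables \
               net.bridge.bridge-nf-call-iptables \
               net.ipv4.ip_forward; do
        # Split each line on "=", strip blanks, compare key and value.
        awk -F'=' -v want="$key" '
            { k = $1; v = $2; gsub(/[ \t]/, "", k); gsub(/[ \t]/, "", v) }
            k == want && v == "1" { ok = 1 }
            END { exit !ok }
        ' "$file" || echo "$key"
    done
}
```

`missing_sysctl_keys /etc/sysctl.d/k8s.conf` prints nothing when the file is complete.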

V. Configure the yum repositories

(1) Switch base and epel to the Aliyun mirrors

    # cd /etc/yum.repos.d/
    # mv CentOS-Base.repo CentOS-Base_bak
    # mv epel.repo epel_bak
    # wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo
    # wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

(2) Add the Kubernetes repository

    # cat <<EOF > /etc/yum.repos.d/kubernetes.repo
    [kubernetes]
    name=Kubernetes
    baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
    enabled=1
    gpgcheck=1
    repo_gpgcheck=1
    gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
    EOF

(3) Add the Docker repository

    # wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo
    # yum clean all && yum makecache

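VI. Install Kubernetes

(1) Install Docker

    # yum install -y docker-ce
    # systemctl enable docker && systemctl start docker
    # docker -v
    Docker version 19.03.8, build afacb8b

(2) Install kubelet, kubeadm, and kubectl

    # yum install kubelet kubeadm kubectl
    # systemctl enable kubelet

The kubernetes package that ships in the distribution's own repositories is not used here: it is version 1.5, which is far too old. Installing kubelet, kubeadm, and kubectl from the repository configured above gets the latest stable release (1.18.2 at the time of writing).

Be sure to run systemctl enable kubelet at this point; otherwise later steps print this warning:

    [WARNING Service-Kubelet]: kubelet service is not enabled, please run 'systemctl enable kubelet.service'

kubelet is the node agent: it communicates with the rest of the cluster and manages the lifecycle of the Pods and containers on its own node. kubeadm is the Kubernetes deployment automation tool; it makes deployment easier and faster. kubectl is the command-line tool for managing a Kubernetes cluster.

Note: every step up to this point must be carried out on all nodes.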
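Because an unpinned install tracks whatever is newest in the repository, a later run of this guide could pull packages newer than the 1.18.2 that kubeadm init targets below. A small sketch of pinning the three packages to one version follows; the helper name `k8s_packages` is made up for illustration, while the name-version form itself is standard yum syntax.

```shell
# k8s_packages VERSION   (hypothetical helper)
# Prints the three package names pinned to VERSION, separated by spaces.
k8s_packages() {
    local version="$1" pkg
    for pkg in kubelet kubeadm kubectl; do
        printf '%s-%s ' "$pkg" "$version"
    done
    printf '\n'
}
```

It could be used as `yum install -y $(k8s_packages 1.18.2)`, assuming that version is still available in the configured repository.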

VII. Configure Kubernetes on the master node (10.0.40.193)

(1) Initialize the Kubernetes cluster

    [root@bigserver3 ~]# kubeadm init --kubernetes-version=1.18.2 \
    > --apiserver-advertise-address=10.0.40.193 \
    > --image-repository registry.aliyuncs.com/google_containers \
    > --service-cidr=10.1.0.0/16 \
    > --pod-network-cidr=10.244.0.0/16 \
    > --ignore-preflight-errors=Swap
    W0427 18:38:50.464858 20657 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]
    [init] Using Kubernetes version: v1.18.2
    [preflight] Running pre-flight checks
    [WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/
    [preflight] Pulling images required for setting up a Kubernetes cluster
    [preflight] This might take a minute or two, depending on the speed of your internet connection
    [preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
    [kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
    [kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
    [kubelet-start] Starting the kubelet
    [certs] Using certificateDir folder "/etc/kubernetes/pki"
    [certs] Generating "ca" certificate and key
    [certs] Generating "apiserver" certificate and key
    [certs] apiserver serving cert is signed for DNS names [bigserver3 kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.1.0.1 10.0.40.193]
    [certs] Generating "apiserver-kubelet-client" certificate and key
    [certs] Generating "front-proxy-ca" certificate and key
    [certs] Generating "front-proxy-client" certificate and key
    [certs] Generating "etcd/ca" certificate and key
    [certs] Generating "etcd/server" certificate and key
    [certs] etcd/server serving cert is signed for DNS names [bigserver3 localhost] and IPs [10.0.40.193 127.0.0.1 ::1]
    [certs] Generating "etcd/peer" certificate and key
    [certs] etcd/peer serving cert is signed for DNS names [bigserver3 localhost] and IPs [10.0.40.193 127.0.0.1 ::1]
    [certs] Generating "etcd/healthcheck-client" certificate and key
    [certs] Generating "apiserver-etcd-client" certificate and key
    [certs] Generating "sa" key and public key
    [kubeconfig] Using kubeconfig folder "/etc/kubernetes"
    [kubeconfig] Writing "admin.conf" kubeconfig file
    [kubeconfig] Writing "kubelet.conf" kubeconfig file
    [kubeconfig] Writing "controller-manager.conf" kubeconfig file
    [kubeconfig] Writing "scheduler.conf" kubeconfig file
    [control-plane] Using manifest folder "/etc/kubernetes/manifests"
    [control-plane] Creating static Pod manifest for "kube-apiserver"
    [control-plane] Creating static Pod manifest for "kube-controller-manager"
    W0427 18:39:40.388099 20657 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"
    [control-plane] Creating static Pod manifest for "kube-scheduler"
    W0427 18:39:40.390080 20657 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"
    [etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
    [wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
    [apiclient] All control plane components are healthy after 34.525978 seconds
    [upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
    [kubelet] Creating a ConfigMap "kubelet-config-1.18" in namespace kube-system with the configuration for the kubelets in the cluster
    [upload-certs] Skipping phase. Please see --upload-certs
    [mark-control-plane] Marking the node bigserver3 as control-plane by adding the label "node-role.kubernetes.io/master=''"
    [mark-control-plane] Marking the node bigserver3 as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]
    [bootstrap-token] Using token: 8ylj4t.8kzvk4ro7hgdehwk
    [bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
    [bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes
    [bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
    [bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
    [bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
    [bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
    [kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
    [addons] Applied essential addon: CoreDNS
    [addons] Applied essential addon: kube-proxy

    Your Kubernetes control-plane has initialized successfully!

    To start using your cluster, you need to run the following as a regular user:

      mkdir -p $HOME/.kube    // note: run these commands on the master node
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

    You should now deploy a pod network to the cluster.
    Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
      https://kubernetes.io/docs/concepts/cluster-administration/addons/

    Then you can join any number of worker nodes by running the following on each as root:

    // run the following on each worker node
    kubeadm join 10.0.40.193:6443 --token 8ylj4t.8kzvk4ro7hgdehwk \
        --discovery-token-ca-cert-hash sha256:46f6cf1d84d0eadb4f6e7f05b908e5572025886d9f134db27f92b98e1c3dd3ed
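The join command at the end of the kubeadm init output is easy to lose in a long log. Assuming the output was saved to a file (for example by piping kubeadm init through tee), the sketch below pulls out the token and the CA certificate hash. The helper name `join_params` and the log file are assumptions for illustration; the patterns follow the token and hash formats visible in the output above.

```shell
# join_params LOGFILE   (hypothetical helper)
# Prints "TOKEN HASH" taken from saved kubeadm init output.
join_params() {
    local log="$1" token hash
    # Bootstrap tokens have the form <6 chars>.<16 chars>, lowercase alphanumerics.
    token=$(grep -oE -- '--token [a-z0-9]{6}\.[a-z0-9]{16}' "$log" | head -n1 | cut -d' ' -f2)
    # The discovery hash is "sha256:" followed by 64 hex digits.
    hash=$(grep -oE -- 'sha256:[0-9a-f]{64}' "$log" | head -n1)
    printf '%s %s\n' "$token" "$hash"
}
```

If the log was not kept, running `kubeadm token create --print-join-command` on the master prints a fresh join command instead.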

(2) Configure kubectl

    # echo $HOME
    /root
    # mkdir -p $HOME/.kube
    # cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

(3) Deploy the flannel network

    # wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml
    # kubectl apply -f kube-flannel.yml
    podsecuritypolicy.policy/psp.flannel.unprivileged created
    clusterrole.rbac.authorization.k8s.io/flannel configured
    clusterrolebinding.rbac.authorization.k8s.io/flannel unchanged
    serviceaccount/flannel unchanged
    configmap/kube-flannel-cfg configured
    daemonset.apps/kube-flannel-ds-amd64 created
    daemonset.apps/kube-flannel-ds-arm64 created
    daemonset.apps/kube-flannel-ds-arm created
    daemonset.apps/kube-flannel-ds-ppc64le created
    daemonset.apps/kube-flannel-ds-s390x created

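Note: if a node has more than one network interface, tell flannel which one to use by adding --iface to the container args in kube-flannel.yml; otherwise DNS resolution in the cluster may fail.

    args:
    - --ip-masq
    - --kube-subnet-mgr
    - --iface=eth0    # the network interface to use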
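kube-flannel.yml defines one DaemonSet per CPU architecture, so the args edit shown above has to be repeated in each of them. The sketch below automates it under the assumption that every args list contains a line reading `- --kube-subnet-mgr`, as in the fragment above. The function name `add_flannel_iface` is hypothetical. It prints the modified manifest rather than editing the file, and it is not idempotent, so it should be run on the freshly downloaded file.

```shell
# add_flannel_iface FILE IFACE   (hypothetical helper)
# Prints FILE with "- --iface=IFACE" inserted after each
# "- --kube-subnet-mgr" line, using the same indentation.
add_flannel_iface() {
    local file="$1" iface="$2"
    awk -v iface="$iface" '
        { print }
        /^[ \t]*- --kube-subnet-mgr[ \t]*$/ {
            indent = $0
            sub(/-.*/, "", indent)    # keep only the leading whitespace
            print indent "- --iface=" iface
        }
    ' "$file"
}
```

A possible workflow is `add_flannel_iface kube-flannel.yml eth0 > kube-flannel-iface.yml`, followed by `kubectl apply -f kube-flannel-iface.yml`.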

VIII. Join the worker node to the Kubernetes cluster

Use the token and hash printed by your own kubeadm init. If they are no longer at hand, kubeadm token create --print-join-command on the master prints a fresh join command.

    [root@bigserver2 yum.repos.d]# kubeadm join 10.0.40.193:6443 --token y4d0ws.w8lxmohfc1o0yq6b \
    > --discovery-token-ca-cert-hash sha256:46f6cf1d84d0eadb4f6e7f05b908e5572025886d9f134db27f92b98e1c3dd3ed --ignore-preflight-errors=all
    W0428 15:07:33.725621 17900 join.go:346] [preflight] WARNING: JoinControlPane.controlPlane settings will be ignored when control-plane flag is not set.
    [preflight] Running pre-flight checks
    [WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/
    [preflight] Reading configuration from the cluster...
    [preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'
    [kubelet-start] Downloading configuration for the kubelet from the "kubelet-config-1.18" ConfigMap in the kube-system namespace
    [kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
    [kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
    [kubelet-start] Starting the kubelet
    [kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

    This node has joined the cluster:
    * Certificate signing request was sent to apiserver and a response was received.
    * The Kubelet was informed of the new secure connection details.

    Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

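If the join fails with errors like these:

    [ERROR DirAvailable--etc-kubernetes-manifests]: /etc/kubernetes/manifests is not empty
    [ERROR FileAvailable--etc-kubernetes-kubelet.conf]: /etc/kubernetes/kubelet.conf already exists
    [ERROR Port-10250]: Port 10250 is in use
    [ERROR FileAvailable--etc-kubernetes-pki-ca.crt]: /etc/kubernetes/pki/ca.crt already exists

Fix: add --ignore-preflight-errors=all to the join command. These errors mean the node still carries state from an earlier kubeadm init or join, so running kubeadm reset on the node before joining again is a cleaner alternative.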

IX. Check the cluster from the master node (10.0.40.193)

(1) Check the cluster status

    [root@bigserver3 ~]# kubectl get nodes
    NAME         STATUS   ROLES    AGE     VERSION
    bigserver2   Ready    <none>   4h44m   v1.18.2
    bigserver3   Ready    master   24h     v1.18.2
    [root@bigserver3 ~]# kubectl get cs
    NAME                 STATUS    MESSAGE              ERROR
    scheduler            Healthy   ok
    controller-manager   Healthy   ok
    etcd-0               Healthy   {"health":"true"}

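If kubectl reports the following error:

    Unable to connect to the server: x509: certificate signed by unknown authority (possibly because of "crypto/rsa: verification error" while trying to verify candidate authority certificate "kubernetes")

Fix: the kubeconfig in $HOME/.kube is stale. Remove the directory with rm -rf $HOME/.kube, recreate it, and copy /etc/kubernetes/admin.conf to $HOME/.kube/config again.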
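When the cluster has more than a couple of nodes, scanning the kubectl get nodes listing by eye gets tedious. A minimal sketch of a filter that lists nodes whose STATUS column is anything other than exactly "Ready" is shown below; the function name `not_ready_nodes` is hypothetical.

```shell
# not_ready_nodes   (hypothetical helper)
# Reads `kubectl get nodes` output on stdin and prints the names of
# nodes whose STATUS is not exactly "Ready". The header row is skipped.
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" { print $1 }'
}
```

Usage would be `kubectl get nodes | not_ready_nodes`. A cordoned node, whose status reads "Ready,SchedulingDisabled", is reported too, which may or may not be what is wanted.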

(2) Create a Pod to verify the cluster

    [root@bigserver3 ~]# kubectl get pod,svc
    NAME                 TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE
    service/kubernetes   ClusterIP   10.1.0.1     <none>        443/TCP   21h
    [root@bigserver3 ~]# kubectl create deployment nginx --image=nginx
    deployment.apps/nginx created
    [root@bigserver3 ~]# kubectl expose deployment nginx --port=80 --type=NodePort
    service/nginx exposed
    [root@bigserver3 ~]# kubectl get pod,svc
    NAME                        READY   STATUS              RESTARTS   AGE
    pod/nginx-f89759699-545w5   0/1     ContainerCreating   0          12s

    NAME                 TYPE        CLUSTER-IP    EXTERNAL-IP   PORT(S)        AGE
    service/kubernetes   ClusterIP   10.1.0.1      <none>        443/TCP        21h
    service/nginx        NodePort    10.1.54.199   <none>        80:30412/TCP   5s

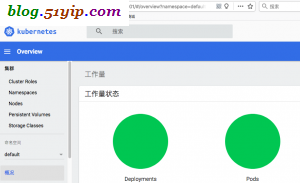
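In the PORT(S) column above, `80:30412/TCP` means that service port 80 is published on port 30412 of every node. The sketch below extracts the node port from such a value with plain parameter expansion; the function name `node_port_of` is hypothetical.

```shell
# node_port_of PORTSPEC   (hypothetical helper)
# Given a PORT(S) value such as "80:30412/TCP", prints the node port.
node_port_of() {
    local spec="$1"
    spec="${spec#*:}"              # drop the service port and the colon
    printf '%s\n' "${spec%%/*}"    # drop the "/TCP" suffix
}
```

With the values from this article, `curl http://10.0.40.222:$(node_port_of 80:30412/TCP)` should return the nginx welcome page once the Pod is Running. `kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'` reads the same number directly from the API.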

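X. Install the Dashboard, the web-based Kubernetes management tool

(1) Project page

https://github.com/kubernetes/dashboard

(2) Install kubernetes-dashboard

Download the manifest, then edit the kubernetes-dashboard Service so that it is exposed through a NodePort:

    # wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0/aio/deploy/recommended.yaml
    # vim recommended.yaml

      ports:
        - port: 443
          targetPort: 8443
          nodePort: 30001    # added; must be within 30000-32767
      type: NodePort         # added
      selector: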

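If the nodePort is outside the allowed range, creating the Service fails with an error like the one below. The fix is to choose a port between 30000 and 32767.

    The Service "kubernetes-dashboard" is invalid: spec.ports[0].nodePort: Invalid value: 28443: provided port is not in the valid range. The range of valid ports is 30000-32767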
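The range check behind that error is simple enough to run before editing the manifest. The sketch below hard-codes the default range of 30000-32767 quoted in the error message. A cluster whose API server was started with a different `--service-node-port-range` would need other bounds. The function name `valid_node_port` is hypothetical.

```shell
# valid_node_port PORT   (hypothetical helper)
# Returns 0 if PORT is an integer inside the default NodePort range
# 30000-32767, non-zero otherwise.
valid_node_port() {
    local port="$1"
    case "$port" in
        ''|*[!0-9]*) return 1 ;;    # reject empty or non-numeric input
    esac
    [ "$port" -ge 30000 ] && [ "$port" -le 32767 ]
}
```

For example, `valid_node_port 30001 && echo ok` prints ok, while `valid_node_port 28443` fails in the same way the API server rejects that value.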

    [root@bigserver3 ~]# kubectl create -f recommended.yaml
    namespace/kubernetes-dashboard created
    serviceaccount/kubernetes-dashboard created
    service/kubernetes-dashboard created
    secret/kubernetes-dashboard-certs created
    secret/kubernetes-dashboard-csrf created
    secret/kubernetes-dashboard-key-holder created
    configmap/kubernetes-dashboard-settings created
    role.rbac.authorization.k8s.io/kubernetes-dashboard created
    clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard created
    rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created
    clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created
    deployment.apps/kubernetes-dashboard created
    service/dashboard-metrics-scraper created
    deployment.apps/dashboard-metrics-scraper created

(3) Check that kubernetes-dashboard is up

    [root@bigserver3 ~]# kubectl get pods -n kube-system -o wide
    NAME                                 READY   STATUS    RESTARTS   AGE     IP            NODE         NOMINATED NODE   READINESS GATES
    coredns-7ff77c879f-bxgtr             1/1     Running   0          22h     10.244.0.2    bigserver3   <none>           <none>
    coredns-7ff77c879f-t4vm9             1/1     Running   0          22h     10.244.0.3    bigserver3   <none>           <none>
    etcd-bigserver3                      1/1     Running   2          22h     10.0.40.193   bigserver3   <none>           <none>
    kube-apiserver-bigserver3            1/1     Running   2          22h     10.0.40.193   bigserver3   <none>           <none>
    kube-controller-manager-bigserver3   1/1     Running   2          22h     10.0.40.193   bigserver3   <none>           <none>
    kube-flannel-ds-amd64-772tm          1/1     Running   0          155m    10.0.40.222   bigserver2   <none>           <none>
    kube-flannel-ds-amd64-d75d5          1/1     Running   0          3h40m   10.0.40.193   bigserver3   <none>           <none>
    kube-proxy-8mpj4                     1/1     Running   0          155m    10.0.40.222   bigserver2   <none>           <none>
    kube-proxy-dmsq4                     1/1     Running   1          22h     10.0.40.193   bigserver3   <none>           <none>
    kube-scheduler-bigserver3            1/1     Running   1          22h     10.0.40.193   bigserver3   <none>           <none>
    [root@bigserver3 ~]# kubectl get services -n kube-system
    NAME       TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)                  AGE
    kube-dns   ClusterIP   10.1.0.10    <none>        53/UDP,53/TCP,9153/TCP   22h
    [root@bigserver3 ~]# netstat -ntlp|grep 30001
    tcp        0      0 0.0.0.0:30001           0.0.0.0:*               LISTEN      6096/kube-proxy

The kube-proxy listener on port 30001 confirms that the NodePort is open. Since recommended.yaml creates its resources in the kubernetes-dashboard namespace (see the create output above), kubectl get pods,svc -n kubernetes-dashboard shows the Dashboard pods and service themselves.

(4) Generate an authentication token for the Dashboard

[root@bigserver3 ~]# kubectl create serviceaccount dashboard-admin -n kube-system

serviceaccount/dashboard-admin created

[root@bigserver3 ~]# kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

clusterrolebinding.rbac.authorization.k8s.io/dashboard-admin created

[root@bigserver3 ~]# kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')

Name: dashboard-admin-token-whgv9

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name: dashboard-admin

kubernetes.io/service-account.uid: dfbb4498-b69f-41f9-84fa-3480b1a4c437

Type: kubernetes.io/service-account-token

Data

====

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IkUtNDZpS3lLbGk5YlhpOFhldTZvcUktOUgxVFk2TkMzN2wwTGlzdlN1aWMifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4td2hndjkiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiZGZiYjQ0OTgtYjY5Zi00MWY5LTg0ZmEtMzQ4MGIxYTRjNDM3Iiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmUtc3lzdGVtOmRhc2hib2FyZC1hZG1pbiJ9.oguhHisptOdzxVIfJVY6sRUzFgok-NVy2Ob4tvAy3_h9tIwa-5hzshSwXduDTWtgQ8KO5ry5EphAaa9t3BBdy_KqPO2_ysdD-Hrlfa0Fi-8jep1mk34Ol0kjw6EzmAXT6I09-hLj0yjHAM3ub3cnV2Rc-hHcEZKDs3lVCRLiPeFggMhTOQsPXw3mElVuX3PxghwKRw4c2Kw5Vvg5ALRQ6lcrYY2Kex4hNFo6y1ewszyMyDPXIFIDwupjYbuAmEKb2C7j5QHargSBJ88q-iTcwtAAZs1_gOpXYOJVNVfBsbHAK4O8FeKZnvJhgL4aufNRw12_ffw1Ot4VqR8v43pWow

ca.crt: 1025 bytes

namespace: 11 bytes
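Only the value on the `token:` line is needed for the login page. As a sketch, the filter below picks it out of the kubectl describe output so it can be copied without the surrounding fields; the function name `token_from_describe` is hypothetical.

```shell
# token_from_describe   (hypothetical helper)
# Reads `kubectl describe secrets ...` output on stdin and prints
# the value of the first "token:" field.
token_from_describe() {
    awk '$1 == "token:" { print $2; exit }'
}
```

Piping the describe command shown above into `token_from_describe` prints just the token string.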

(5) Log in to the Dashboard: open https://10.0.40.193:30001 in Firefox and sign in with the token generated above.

Please credit the source when reposting.

Author: 海底苍鹰

Source: http://blog.51yip.com/cloud/2399.html